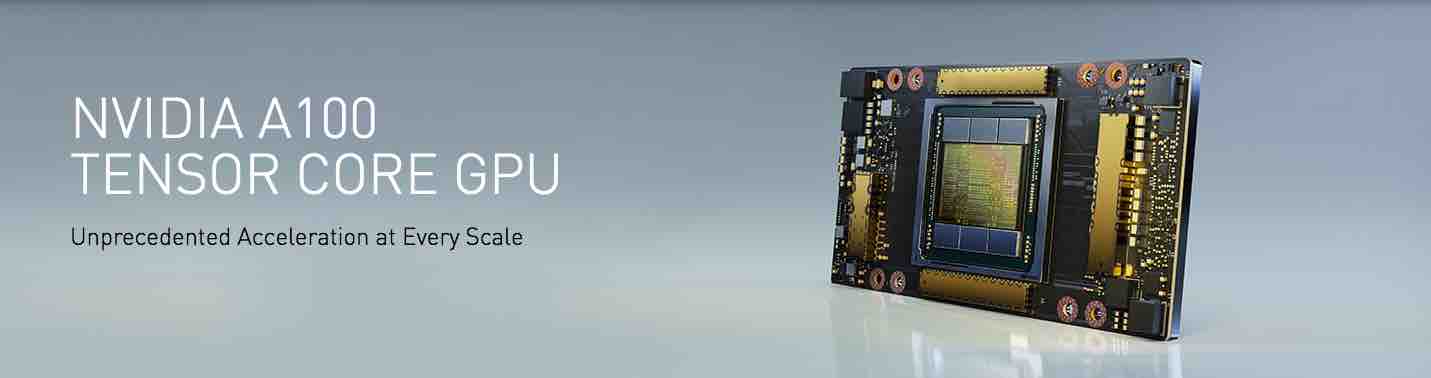

We are pleased to offer new Deep Learning servers based on the latest A100 GPUs, built on NVIDIA’s Ampere architecture, which was announced today. Our NVIDIA A100 servers are suitable for all AI workloads, offering unprecedented compute density, performance, and flexibility in the world’s first 5 petaFLOPS AI system. They feature the world’s most advanced accelerator, the NVIDIA A100 Tensor Core GPU, which enables enterprises to consolidate training, inference, and analytics into a unified, easy-to-deploy AI infrastructure that includes direct access to NVIDIA AI experts.

Dihuni’s A100 Deep Learning servers are fully customizable and built to order directly by manufacturers such as Supermicro, HPE, and Dell.

To check out newly released models, please visit this page.

Key features of A100 Deep Learning Servers: