The price above is for informational purposes and includes 2 x AMD EPYC Milan 7313 CPUs (16 cores each at 2.45GHz; 64 threads total), 4 x NVIDIA A100 80GB GPUs with SXM4 NVLink, 8 x 32GB DDR4-3200 memory (256GB total), 1 x 960GB NVMe M.2 SSD, and 2 x 10GbE Ethernet ports.

For best pricing, please fill out the configuration form below. Educational and Inception program discounts available.

Key Features

- Manufacturer Part Number: AI-A100-SXM4-4NVE

- NVIDIA DGX A100 Server Architecture with:

- 4 x NVIDIA® HGX™ A100 80GB GPUs installed, interconnected with NVIDIA® NVLink™ for high-bandwidth GPU-to-GPU communication

- HGX-4 A100 GPU baseboard

- Direct connect PCI-E Gen4 Platform with NVIDIA® NVLink™

- Onboard BMC with integrated IPMI 2.0 + KVM over a dedicated 10G LAN port

- Dual AMD EPYC™ 7003/7002 Series Processors

- 4 PCI-E Gen 4 x16 (LP), 1 PCI-E Gen 4 x8 (LP)

- 4 Hot-swap 2.5″ drive bays (SAS/SATA/NVMe Hybrid)

- 2 x 2200W Redundant Power Supplies, Titanium Level

- 4 Hot-swap heavy-duty cooling fans

- 2200W Redundant Platinum Level Power Supplies

- 3 Years Manufacturer’s Warranty

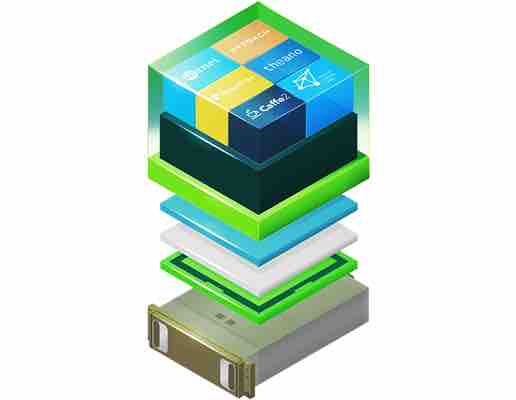

NVIDIA NGC Pre-Loaded (Optional)

This deep learning server can be delivered with NVIDIA NGC containers preloaded. NGC gives researchers, data scientists, and developers performance-engineered containers for AI software such as TensorFlow, Keras, PyTorch, MXNet, NVIDIA TensorRT™, RAPIDS and more. These pre-integrated containers include the NVIDIA AI stack, including the NVIDIA® CUDA® Toolkit and NVIDIA deep learning libraries, and are easy to upgrade using Docker commands.
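As a rough illustration of what upgrading an NGC container with Docker can look like, the sketch below builds the two commands involved: pulling a newer image tag from the NGC registry (`nvcr.io/nvidia`) and starting it with all GPUs visible. The helper names and the `24.01-py3` tag are placeholders chosen for illustration, and `--gpus all` assumes the NVIDIA Container Toolkit is installed on the server; check the NGC catalog for the tags actually available.

```python
import shlex
from typing import List

# NVIDIA's public NGC container registry namespace.
NGC_REGISTRY = "nvcr.io/nvidia"


def ngc_image(framework: str, tag: str) -> str:
    """Return the full image reference for an NGC framework container."""
    return f"{NGC_REGISTRY}/{framework}:{tag}"


def upgrade_commands(framework: str, new_tag: str) -> List[str]:
    """Build the shell commands to fetch and start a newer container tag.

    Hypothetical helper: it only builds command strings and runs nothing.
    """
    image = ngc_image(framework, new_tag)
    pull = ["docker", "pull", image]
    # --gpus all exposes every GPU to the container
    # (assumes the NVIDIA Container Toolkit is installed).
    run = ["docker", "run", "--gpus", "all", "--rm", "-it", image]
    return [shlex.join(pull), shlex.join(run)]


if __name__ == "__main__":
    # The tag below is a placeholder, not a recommendation.
    for command in upgrade_commands("pytorch", "24.01-py3"):
        print(command)
```

Printing the commands rather than executing them lets you review the image name and tag before anything is downloaded.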
