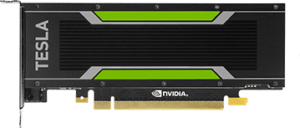

This product has been discontinued/EOL. Please consider the new Tesla T4 GPU from NVIDIA.

Ideal for your advanced digital transformation applications: video processing, big data, hyperconverged appliances, Internet of Things (IoT), in-memory analytics, machine learning (ML), artificial intelligence (AI), and intensive data center or hyperscale infrastructure workloads. NVIDIA Tesla GPUs are also well suited to autonomous cars, molecular dynamics, computational biology, fluid simulation, and advanced Virtual Desktop Infrastructure (VDI) applications.

In the new era of AI and intelligent machines, deep learning is shaping our world like no other computing model in history. Interactive speech, visual search, and video recommendations are a few of the many AI-based services that we use every day. Accuracy and responsiveness are key to user adoption of these services. As deep learning models increase in accuracy and complexity, CPUs are no longer capable of delivering a responsive user experience. The NVIDIA Tesla P4 is powered by the NVIDIA Pascal™ architecture and purpose-built to boost efficiency for scale-out servers running deep learning workloads, enabling smart, responsive AI-based services. It slashes inference latency by 15X in any hyperscale infrastructure and provides 60X better energy efficiency than CPUs. This unlocks a new wave of AI services that were previously impossible due to latency limitations.

As an NVIDIA Preferred Solution Provider, we are authorized by the manufacturer and proudly deliver only original factory-packaged products.

Key Features

- Manufacturer Part Number: 900-2G414-0000-000

- Sold and supported by NVIDIA

- Small form-factor, 50/75-Watt design fits any scale-out server

- Passively cooled board

- 8 GB GDDR5 memory

- INT8 operations slash inference latency by 15X

- Delivers 21 TOPS (tera-operations per second) of inference performance

- Hardware-decode engine capable of transcoding and inferencing 35 HD video streams in real time

- Low-profile PCIe bracket (for a full-height bracket, please select the option below for 900-2G414-0000-001)

- 3 Years Manufacturer’s Warranty

Ashish –

We ordered NVIDIA Tesla P4 GPU 8GB DDR5 and got immediate reply from Dihuni. Prompt shipping & updates on delivery. Highly recommended for any order.

M.P –

We got this in less than a week from Dihuni. Very fast service with regular updates, tracking information and great price on the gpu.

Arthur –

we are using this nvidia product for deep learning and it works great.

Tony –

the price point of the P4 GPU makes it very affordable and delivers the performance we need.

L.Y –

We are happy to find an authorized NVIDIA partner that delivers. I don’t recommend buying such high end product from online stores that sell this product without proper authorization from manufacturer. Dihuni kept us posted about our order and although our package shipped out a couple days after initial date, we were happy that we were properly informed. Nice job Dihuni!

Jennifer –

We have ordered this product from Dihuni multiple times and each time they have come through with on-time delivery and top service!!

D Chang –

We have installed these gpus in our Supermicro 4u servers and they are very good at reasonable price.

Shankar –

We ordered a couple of these from you. Your prices are better than competition. Keep up the nice work.