The Supermicro A+ Server AS -2124GQ-NART (AS-2124GQ-NART) is well suited to Digital Transformation applications, from natural speech processing by computers to autonomous vehicles, predictive analytics in manufacturing, smart cities, and smart buildings. With the explosion in IT and IoT data, AI models are growing rapidly in size. HPC applications are similarly growing in complexity as they unlock new scientific insights.

The 2124GQ-NART can be optimized to deliver the highest compute performance and memory for rapid model training. As a balanced data center platform for HPC and AI applications, Supermicro’s new 2U system leverages the NVIDIA HGX A100 4-GPU board with four direct-attached NVIDIA A100 Tensor Core GPUs, using PCI-E 4.0 for maximum performance and NVIDIA NVLink™ for high-speed GPU-to-GPU interconnects. This advanced GPU system accelerates compute, networking, and storage performance with support for one PCI-E 4.0 x8 and up to four PCI-E 4.0 x16 expansion slots for GPUDirect RDMA high-speed network cards and storage, such as InfiniBand™ HDR™ adapters, which support up to 200Gb per second of bandwidth.
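As a rough illustration of the bandwidth figures above, the sketch below converts the quoted 200Gb per second InfiniBand HDR rate into gigabytes per second and estimates the theoretical one-direction throughput of a PCI-E 4.0 slot. It assumes the published PCIe 4.0 signaling rate of 16 GT/s per lane with 128b/130b encoding; the function names are illustrative only, and real-world throughput will be lower because of protocol overhead.

```python
# Back-of-the-envelope link bandwidth estimates (theoretical peaks, not measurements).

PCIE4_GT_PER_LANE = 16.0        # assumed PCIe 4.0 signaling rate per lane, in GT/s
PCIE4_ENCODING = 128.0 / 130.0  # 128b/130b line encoding efficiency


def gbit_to_gbyte(gbit_per_s: float) -> float:
    """Convert gigabits per second to gigabytes per second."""
    return gbit_per_s / 8.0


def pcie4_gbyte_per_s(lanes: int) -> float:
    """Theoretical one-direction PCIe 4.0 throughput in GB/s for a lane count."""
    return gbit_to_gbyte(PCIE4_GT_PER_LANE * PCIE4_ENCODING * lanes)


if __name__ == "__main__":
    print(f"InfiniBand HDR (200 Gb/s): {gbit_to_gbyte(200):.1f} GB/s")
    print(f"PCI-E 4.0 x16: {pcie4_gbyte_per_s(16):.1f} GB/s per direction")
    print(f"PCI-E 4.0 x8:  {pcie4_gbyte_per_s(8):.1f} GB/s per direction")
```

Under these assumptions, a PCI-E 4.0 x16 slot (about 31.5 GB/s per direction) has enough theoretical headroom for a single 200Gb per second HDR adapter (25 GB/s).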

Key Features

- Manufacturer Part Number: AS -2124GQ-NART | AS-2124GQ-NART

- NVIDIA HGX A100 Server Architecture with:

  - 4 x A100 80GB NVLink GPUs installed (NVIDIA® HGX™ A100 GPUs); highest GPU-to-GPU communication using NVIDIA® NVLink™

  - HGX-4 A100 GPU baseboard

  - Direct connect PCI-E Gen4 Platform with NVIDIA® NVLink™

- Onboard BMC supports integrated IPMI 2.0 + KVM with dedicated 10G LAN

- Dual AMD EPYC™ 7003 Series Processors

- Up to 8TB Registered ECC DDR4 3200MHz SDRAM in 32 DIMMs

- 4 PCI-E Gen 4 x16 (LP), 1 PCI-E Gen 4 x8 (LP)

- 4 Hot-swap 2.5″ drive bays (SAS/SATA/NVMe Hybrid)

- 2x 2200W Redundant Power Supplies, Titanium Level + 4 Hot-swap heavy duty fans

- Ubuntu Linux OS installed

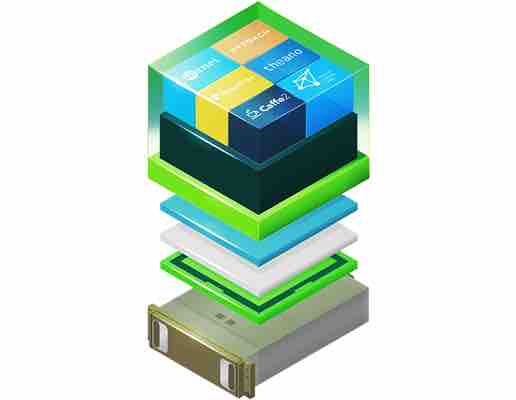

- Option for pre-loaded NGC Docker containers for Deep Learning (packages include NVIDIA CUDA Toolkit, NVIDIA DIGITS, NVIDIA TensorRT, Keras, NVCaffe, Caffe2, Microsoft Cognitive Toolkit (CNTK), MXNet, PyTorch, TensorFlow, Theano, Torch, and others as needed)

- 4 Hot-swap heavy-duty cooling fans

- 2200W Redundant Platinum Level Power Supplies

- 3 Years Manufacturer’s Warranty

NVIDIA NGC Pre-Loaded

This Deep Learning server is available with NVIDIA NGC containers preloaded. NGC empowers researchers, data scientists, and developers with performance-engineered containers featuring AI software like TensorFlow, Keras, PyTorch, MXNet, NVIDIA TensorRT™, RAPIDS and more. These pre-integrated containers include the NVIDIA AI stack, including the NVIDIA® CUDA® Toolkit and NVIDIA deep learning libraries, and are easy to upgrade using Docker commands.
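As a minimal sketch of what upgrading with Docker commands can look like, the snippet below builds a `docker pull` command for an image hosted on the NGC registry (`nvcr.io`). The helper name `ngc_pull_command` is hypothetical, and the tag `23.10-py3` is only an example placeholder; the tags actually available should be checked in the NGC catalog. The command is printed rather than executed, so the snippet runs without Docker installed.

```python
# Build (but do not run) a docker pull command for an NGC framework container.
from typing import List

NGC_REGISTRY = "nvcr.io/nvidia"  # NVIDIA's NGC container registry namespace


def ngc_pull_command(framework: str, tag: str) -> List[str]:
    """Return the argument list for pulling one NGC framework image.

    `framework` is the image name (for example "pytorch" or "tensorflow");
    `tag` is the release tag, which is an assumption to verify in the catalog.
    """
    return ["docker", "pull", f"{NGC_REGISTRY}/{framework}:{tag}"]


if __name__ == "__main__":
    # Example placeholder tag; replace with a tag listed in the NGC catalog.
    cmd = ngc_pull_command("pytorch", "23.10-py3")
    print(" ".join(cmd))
    # To actually pull the image, pass the list to subprocess.run(cmd, check=True).
```

Pulling a newer tag and restarting the workload from that image is the usual upgrade path for containerized frameworks, which keeps the host operating system and driver installation untouched.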
