End of life (EOL). This product is no longer available. Please consider the Tesla V100.

Ideal for your Advanced Digital Transformation Applications: Video Processing, Big Data, Hyperconverged Appliances, Internet of Things (IoT), In-Memory Analytics, Machine Learning (ML), Artificial Intelligence (AI), and intensive Data Center, High Performance Computing (HPC), or Hyperscale Infrastructure Applications. NVIDIA Tesla GPUs are well suited to autonomous cars, molecular dynamics, computational biology, and fluid simulation, and even to advanced Virtual Desktop Infrastructure (VDI) applications.

In the new era of AI and intelligent machines, deep learning is shaping our world like no other computing model in history. Interactive speech, visual search, and video recommendations are a few of many AI-based services that we use every day. Accuracy and responsiveness are key to user adoption for these services. As deep learning models increase in accuracy and complexity, CPUs are no longer capable of delivering a responsive user experience. NVIDIA® Tesla® P100 GPU accelerators are the world’s first AI supercomputing data center GPUs. They tap into NVIDIA Pascal™ GPU architecture to deliver a unified platform for accelerating both HPC and AI. With higher performance and fewer, lightning-fast nodes, Tesla P100 enables data centers to dramatically increase throughput while also saving money. With over 500 HPC applications accelerated (including 15 of the top 15), as well as all deep learning frameworks, every HPC customer can deploy accelerators in their data centers.

As an NVIDIA Preferred Solution Provider, we are authorized by the manufacturer and proudly deliver only original factory-packaged products. We strongly recommend against purchasing this or any other NVIDIA product from unauthorized partners.

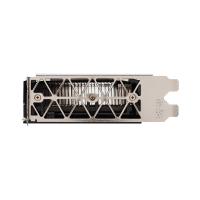

Key Features

- Sold and supported by NVIDIA

- Dual-slot 10.5 inch PCI Express Gen3 card

- 250W Max Power Consumption

- Passively cooled board

- 16GB Stacked Memory Capacity

- Manufacturer’s Part Number: 900-2H400-0000-000

Derek C –

Dihuni team was upfront about the discontinuance of this product and helped us in getting some urgent GPUs for AI lab use. Professional company.

Dihuni –

Thank you. Yes, the P100 EOL was announced earlier this year. The current last date to buy is December 26th. We appreciate your business.

S.M –

We debated between V100, P100 and P4 and settled on P100 because of price attractiveness compared to V100 and still getting great performance.

L.Y –

We are happy to have found an authorized NVIDIA partner that delivers. I don’t recommend buying such a high-end product from online stores that sell it without proper authorization from the manufacturer. Dihuni kept us posted about our order, and although our package shipped out a couple of days after the initial date, we were happy that we were properly informed. Nice job Dihuni!

N.T –

I requested expedited delivery and the product was shipped the next day.