Supermicro SYS-4029GP-TVRT | 4029GP-TVRT 8 x NVIDIA Tesla V100 SXM2 32GB NVLink GPU 2S Xeon 6248 20C 512GB DGX-1 comparable OptiReady Deep Learning Server

The Supermicro SuperServer SYS-4029GP-TVRT 8-GPU Deep Learning Server is similar to NVIDIA’s DGX-1. Both systems are built on the same NVIDIA architecture, but the 4029GP-TVRT offers greater configuration flexibility and very attractive price/performance. Like the DGX-1, the 4029GP-TVRT comes complete with NVIDIA’s NGC Docker software stack for Deep Learning/AI, so you can start running AI applications and training neural networks right away. Our customers have deployed this system to accelerate highly parallel applications such as Artificial Intelligence (AI), Deep Learning, Machine Learning, Autonomous Machines, Self-Driving Cars, Big Data Analytics, Internet of Things (IoT), Smart Cities, Oil & Gas Research, Computer Aided Design (CAD), Virtual/Augmented Reality, HPC, Virtualization, Database Processing, and General IT & Cloud Enterprise Applications.

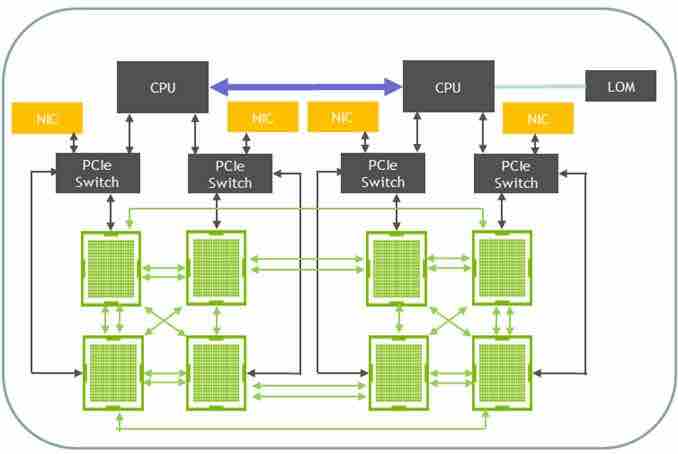

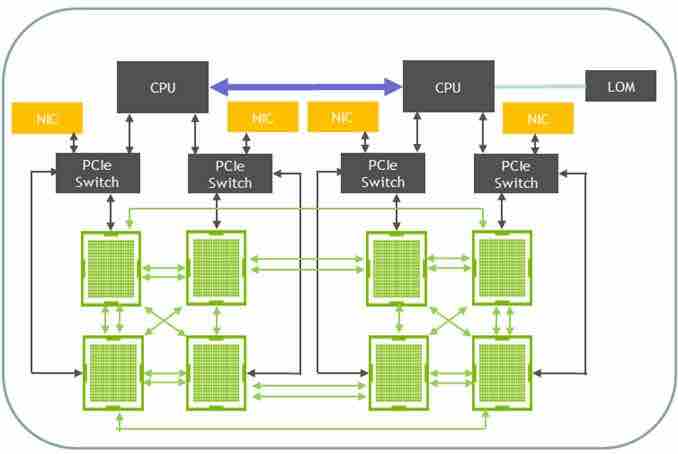

The 4029GP-TVRT supports eight NVIDIA Tesla V100 32GB SXM2 GPU accelerators with maximum GPU-to-GPU bandwidth for cluster and hyper-scale applications. It incorporates NVIDIA NVLink technology, with over five times the bandwidth of PCI-E 3.0, and features independent GPU and CPU thermal zones to ensure high performance and stability under the most demanding workloads.

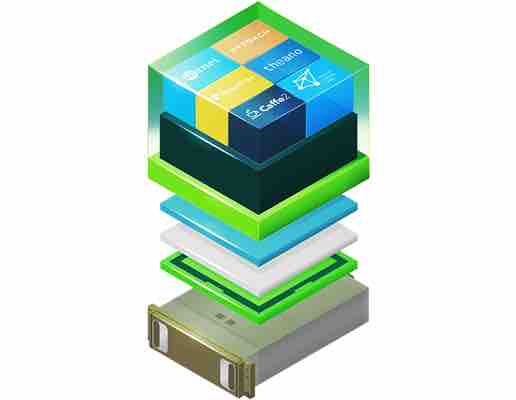

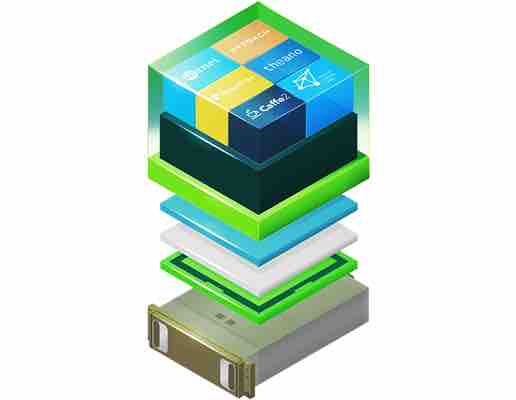

SYS-4029GP-TVRT with NVIDIA NGC Pre-Loaded

This Deep Learning server is available with NVIDIA NGC containers preloaded. NGC provides researchers, data scientists, and developers with performance-engineered containers featuring AI software such as TensorFlow, Keras, PyTorch, MXNet, NVIDIA TensorRT™, RAPIDS, and more. These pre-integrated containers bundle the NVIDIA AI stack, including the NVIDIA® CUDA® Toolkit and NVIDIA deep learning libraries, and are easy to upgrade using Docker commands.
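Upgrading a preloaded NGC container usually means pulling a newer image tag from NVIDIA’s registry (`nvcr.io/nvidia/...`) and starting it with GPU access. The sketch below only builds and prints those Docker commands by default. It assumes Docker 19.03 or later with the NVIDIA Container Toolkit installed (which provides the `--gpus` flag); the tag `YY.MM-py3` and the helper names `ngc_image`, `upgrade_commands`, and `run` are hypothetical placeholders, so check the NGC catalog for the real tags of each framework.

```python
import shlex
import subprocess

NGC_REGISTRY = "nvcr.io/nvidia"  # NVIDIA NGC container registry namespace


def ngc_image(framework: str, tag: str) -> str:
    """Return a fully qualified NGC image reference such as nvcr.io/nvidia/pytorch:<tag>."""
    return f"{NGC_REGISTRY}/{framework}:{tag}"


def upgrade_commands(framework: str, tag: str) -> list:
    """Build the docker commands that pull a newer NGC image and start it on all GPUs.

    Assumes Docker 19.03+ with the NVIDIA Container Toolkit (for the --gpus flag).
    """
    image = ngc_image(framework, tag)
    return [
        ["docker", "pull", image],                               # fetch the newer image
        ["docker", "run", "--gpus", "all", "--rm", "-it", image],  # run it on all 8 GPUs
    ]


def run(commands, dry_run=True):
    """Print each command; execute it only when dry_run is False."""
    for cmd in commands:
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # "YY.MM-py3" is a placeholder tag; substitute a real tag from the NGC catalog.
    run(upgrade_commands("pytorch", "YY.MM-py3"))
```

Keeping `dry_run=True` as the default lets you review the exact commands before anything is pulled or started on the server.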

Key Features

Up to 8 NVIDIA Tesla V100 SXM2 32GB GPUs; up to 300 GB/s GPU-to-GPU NVIDIA NVLink; optimized for NVIDIA GPUDirect RDMA

- 8 x NVIDIA Tesla V100 SXM2 32GB HBM2 NVLink GPU Installed

- 2 x Intel Xeon Gold 6248 20 Cores/40 Threads 2.5GHz 150W CPUs Installed

- 512GB (32GBx16) DDR4-2933 ECC Registered Memory Installed

- 1 x 960GB SATA3 6Gb/s, 7.0mm, 16nm, 0.7 DWPD SSD Installed

- 16 hot-swap 2.5″ SAS/SATA drive bays; supports 8 NVMe drives

- 4 PCI-E 3.0 x16 (LP, GPU tray for GPUDirect RDMA) slots, 2 PCI-E 3.0 x16 (LP, CPU tray) slots

- Dual 10GBase-T LAN with Intel® X550

- 8x 92mm cooling fans

- 2200W Redundant (2+2) Power Supplies; Titanium Level (96%)

- Ubuntu Linux (latest compatible version) installed

- NGC Docker Container for Deep Learning Pre-loaded (Optional, please select below)

- Manufacturer’s Part Number: SYS-4029GP-TVRT

Manufacturer’s Product Page: https://www.supermicro.com/en/products/system/4U/4029/SYS-4029GP-TVRT.cfm
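The installed configuration adds up to a few useful totals. The sketch below simply multiplies out the counts listed above; it assumes two hardware threads per core (Intel Hyper-Threading enabled) and takes the 24 DIMM slots from the Technical Specifications. `ServerConfig` is a hypothetical helper for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServerConfig:
    """Installed configuration as listed above (unit counts and per-unit sizes)."""
    gpus: int = 8
    gpu_memory_gb: int = 32     # HBM2 per Tesla V100 SXM2
    cpus: int = 2
    cores_per_cpu: int = 20     # Xeon Gold 6248
    threads_per_core: int = 2   # assumes Hyper-Threading is enabled
    dimms_installed: int = 16
    dimm_size_gb: int = 32
    dimm_slots: int = 24        # per the Technical Specifications

    def totals(self) -> dict:
        """Return aggregate GPU memory, CPU cores/threads, system memory, and free slots."""
        return {
            "gpu_memory_gb": self.gpus * self.gpu_memory_gb,
            "cpu_cores": self.cpus * self.cores_per_cpu,
            "cpu_threads": self.cpus * self.cores_per_cpu * self.threads_per_core,
            "system_memory_gb": self.dimms_installed * self.dimm_size_gb,
            "free_dimm_slots": self.dimm_slots - self.dimms_installed,
        }


if __name__ == "__main__":
    for name, value in ServerConfig().totals().items():
        print(f"{name}: {value}")
```

With the default values this gives 256GB of total GPU memory, 40 cores/80 threads, 512GB of system memory, and 8 free DIMM slots for later expansion.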

Supermicro SYS-4029GP-TVRT NVLink Performance

Multi-GPU systems such as the SYS-4029GP-TVRT, with its eight Tesla V100 GPUs, are used in a variety of industries as developers rely on more parallelism in applications like AI computing. Many of these systems use 4-GPU and 8-GPU configurations connected over the PCIe system interconnect to solve very large, complex problems. However, PCIe bandwidth is increasingly the bottleneck at the multi-GPU system level, driving the need for a faster and more scalable multiprocessor interconnect. NVIDIA® NVLink™ technology addresses this by providing higher bandwidth, more links, and improved scalability for multi-GPU and multi-GPU/CPU system configurations. A single NVIDIA Tesla® V100 GPU supports up to six NVLink connections with a total bandwidth of 300 GB/s, roughly 10X the bandwidth of PCIe Gen 3.
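The 300 GB/s and "10X" figures can be reproduced with simple arithmetic. This sketch assumes NVLink 2.0 on the V100 at 25 GB/s per direction per link, and PCIe Gen 3 x16 at 8 GT/s per lane with 128b/130b encoding; both totals count the two directions together, as the 300 GB/s figure does.

```python
# NVLink 2.0 on Tesla V100 SXM2: six links, 25 GB/s per direction each
NVLINK_LINKS = 6
NVLINK_GB_PER_DIRECTION = 25.0

# PCIe Gen 3 x16: 8 GT/s per lane with 128b/130b line encoding
PCIE3_GT_PER_LANE = 8.0
PCIE3_LANES = 16
PCIE3_ENCODING = 128 / 130


def nvlink_total_gbytes() -> float:
    """Aggregate NVLink bandwidth over all links, both directions, in GB/s."""
    return NVLINK_LINKS * NVLINK_GB_PER_DIRECTION * 2


def pcie3_x16_total_gbytes() -> float:
    """PCIe Gen 3 x16 bandwidth, both directions, in GB/s (bits divided by 8)."""
    per_direction = PCIE3_GT_PER_LANE * PCIE3_LANES * PCIE3_ENCODING / 8
    return per_direction * 2


nv = nvlink_total_gbytes()
pcie = pcie3_x16_total_gbytes()
print(f"NVLink (V100): {nv:.0f} GB/s")
print(f"PCIe 3.0 x16:  {pcie:.1f} GB/s")
print(f"Ratio:         {nv / pcie:.1f}x")
```

The result is about 31.5 GB/s for PCIe 3.0 x16 and a ratio of roughly 9.5x, which is where the rounded "10X" comes from.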

“On initial internal benchmark tests, our 4029GP-TVRT system was able to achieve 5,188 images per second on ResNet-50 and 3,709 images per second on InceptionV3 workloads,” said Charles Liang, President and CEO of Supermicro. “We also see very impressive, almost linear performance increases when scaling to multiple systems using GPU Direct RDMA. With our latest innovations incorporating the new NVIDIA V100 32GB PCI-E and V100 32GB SXM2 GPUs with 2X memory in performance-optimized 1U and 4U systems with next-generation NVLink, our customers can accelerate their applications and innovations to help solve the world’s most complex and challenging problems.”

“Supermicro designs the most application-optimized GPU systems and offers the widest selection of GPU-optimized servers and workstations in the industry. Our high performance computing solutions enable deep learning, engineering and scientific fields to scale out their compute clusters to accelerate their most demanding workloads and achieve fastest time-to-results with maximum performance per watt, per square foot and per dollar. With our latest innovations incorporating the new NVIDIA V100 PCI-E and V100 SXM2 GPUs in performance-optimized 1U and 4U systems with next-generation NVLink, our customers can accelerate their applications and innovations to help solve the world’s most complex and challenging problems.”

Charles Liang, President and CEO of Supermicro
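To put the quoted ResNet-50 result of 5,188 images per second in perspective, the sketch below derives per-GPU throughput and the time for one pass over a training set. It assumes the rate holds steady across the 8 GPUs and uses the ImageNet-1k (ILSVRC 2012) training set of 1,281,167 images as an illustrative dataset; the benchmark conditions behind the quote are not stated, so treat the result as a rough estimate that ignores data loading and evaluation overhead.

```python
IMAGES_PER_SEC = 5188               # quoted 8-GPU ResNet-50 throughput
NUM_GPUS = 8
IMAGENET_TRAIN_IMAGES = 1_281_167   # assumption: ImageNet-1k training set size

per_gpu = IMAGES_PER_SEC / NUM_GPUS                 # images/s per V100
epoch_seconds = IMAGENET_TRAIN_IMAGES / IMAGES_PER_SEC  # one full pass over the data

print(f"Per-GPU throughput: {per_gpu:.1f} images/s")
print(f"Time per epoch: {epoch_seconds:.0f} s (~{epoch_seconds / 60:.1f} min)")
```

Under these assumptions, each GPU processes about 648.5 images per second and one epoch takes roughly 247 seconds (about 4.1 minutes).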

Technical Specifications

| Specification | Details |
|---|---|
| Mfr Part # | SYS-4029GP-TVRT |
| Motherboard | Super X11DGO-T |
| CPU | Dual Socket P (LGA 3647); Intel® Xeon® Scalable Processors; 3 UPI up to 10.4GT/s; supports CPU TDP 70-205W; 2 x Intel Xeon Gold 6248 20 Cores/40 Threads 2.5GHz 150W CPUs installed |
| Cores | Up to 28 cores with Intel® HT Technology |
| GPU | 8 x NVIDIA Tesla V100 SXM2 32GB HBM2 NVLink GPUs installed |
| Memory Capacity | 24 DIMM slots; up to 3TB ECC 3DS LRDIMM or 1TB ECC RDIMM, DDR4 up to 2666MHz; 512GB (32GB x 16) DDR4-2933 ECC Registered Memory installed |
| Memory Type | 2666/2400/2133MHz ECC DDR4 SDRAM |
| Chipset | Intel® C621 chipset |
| SATA | SATA3 (6Gbps) with RAID 0, 1, 5, 10 |
| Network Controllers | Dual-port 10GBase-T from Intel X550 Ethernet controller |
| IPMI | Support for Intelligent Platform Management Interface v2.0; IPMI 2.0 with virtual media over LAN and KVM-over-LAN support |
| Graphics | ASPEED AST2500 BMC |
| SATA Ports | 8 SATA3 (6Gbps) ports |
| LAN | 2 RJ45 10GBase-T LAN ports; 1 RJ45 dedicated IPMI LAN port |
| USB | 2 USB 3.0 ports (front) |
| Video | 1 VGA connector |
| COM Port | 1 COM port (header) |
| BIOS Type | AMI 32Mb SPI Flash ROM |
| BIOS Features | Intel® Node Manager; IPMI 2.0; KVM with dedicated LAN; SSM, SPM, SUM; SuperDoctor® 5; Watchdog |
| Form Factor | 4U rackmountable; Rackmount Kit (MCP-290-00057-0N) |
| Chassis Model | CSE-R422BG-1 |
| Height | 7.0″ (178mm) |
| Width | 17.6″ (447mm) |
| Depth | 31.7″ (805mm) |
| Weight | Net: 80 lbs (36.2 kg); Gross: 135 lbs (61.2 kg) |
| Available Colors | Black |
| Hot-swap Drive Bays | 16 hot-swap 2.5″ SAS/SATA drive bays; 1 x 960GB SATA3 6Gb/s, 7.0mm, 16nm, 0.7 DWPD SSD installed |
| PCI-Express | 4 PCI-E 3.0 x16 (LP, GPU tray for GPUDirect RDMA) slots; 2 PCI-E 3.0 x16 (LP, CPU tray) slots |
| Storage Backplane | 16-port 2U SAS3 12Gbps hybrid backplane; supports up to 8x 2.5″ SAS3/SATA3 HDD/SSD and 8x SAS3/SATA3/NVMe storage devices |
| Fans | 8x 92mm cooling fans; 4x 80mm cooling fans |
| Power Supply | 2200W redundant Titanium Level power supplies with PMBus |
| Total Output Power | 1200W/1800W/1980W/2090W/2200W (2200W: UL/cUL only) |
| PSU Dimensions (W x H x L) | 106.5 x 82.4 x 203.5 mm |
| Input | 1200W: 100-127 Vac / 14-11 A / 50-60 Hz; 1800W: 200-220 Vac / 10-9.5 A / 50-60 Hz; 1980W: 220-230 Vac / 10-9.5 A / 50-60 Hz; 2090W: 230-240 Vac / 10-9.8 A / 50-60 Hz; 2200W: 220-240 Vac / 12-11 A / 50-60 Hz (UL/cUL only); 2090W: 180-220 Vac / 14-11 A / 50-60 Hz (UL/cUL only); 2090W: 230-240 Vdc / 10-9.8 A (CCC only) |
| +12V | Max: 100A / Min: 0A (1200W); Max: 150A / Min: 0A (1800W); Max: 165A / Min: 0A (1980W); Max: 174.17A / Min: 0A (2090W); Max: 183.3A / Min: 0A (2200W) |
| 12Vsb | Max: 2A / Min: 0A |
| Output Type | Gold Finger (connector on M/P) |
| Certification | UL/cUL/CB/BSMI/CE/CCC |
| RoHS | RoHS Compliant |
| Environmental Spec. | Operating temperature: 10°C to 35°C (50°F to 95°F); Non-operating temperature: -40°C to 60°C (-40°F to 140°F); Operating relative humidity: 8% to 90% (non-condensing); Non-operating relative humidity: 5% to 95% (non-condensing) |
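The +12V current limits in the power supply specifications line up with the output ratings: multiplying each maximum current by the nominal 12 V rail voltage recovers the rated wattage. The quick check below assumes an exact 12 V rail and ignores the separate 12Vsb standby output.

```python
RAIL_VOLTS = 12.0  # nominal +12V main rail (assumption: exactly 12 V)

# (rated output in W, max +12V current in A), taken from the specifications
RATINGS = [(1200, 100.0), (1800, 150.0), (1980, 165.0), (2090, 174.17), (2200, 183.3)]

for watts, amps in RATINGS:
    implied = amps * RAIL_VOLTS  # P = V * I
    print(f"{watts}W rating: {amps}A x 12V = {implied:.1f} W")
```

Each implied value lands within a watt of its rating (for example, 183.3A x 12V is 2199.6W for the 2200W mode).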

Michael W –

Dihuni helped us define our Deep Learning Server to include Xeon CPUs and Tesla V100 GPUs. We received the fully configured system on time and are very happy with Dihuni as our partner.

J.L –

We were originally considering a DGX-1. After a careful comparison of cost and features, we bought two of these servers from Dihuni. We saved considerably compared to the DGX-1 and received the systems loaded with Ubuntu and NVIDIA NGC Docker software, which included TensorFlow and other tools we needed. All in just 2 weeks from order. Dihuni made it easy to understand the benefits of this server and also involved the Supermicro engineering team in discussions before we made the purchase. Great company to work with, and we will purchase more of these in the second phase of our project.

Jim –

Can I get this with only 4 GPUs?

Dihuni –

Yes, you can buy the following configuration with 4 Tesla V100 GPUs, or email us at digital@dihuni.com for a custom configuration.

https://www.dihuni.com/shop/servers/servers-by-manufacturer/supermicro-servers/dihuni-optiready-supermicro-4029gp-tvrt-v4-1-4-x-nvidia-tesla-v100-sxm2-32gb-nvlink-gpu-2-x-xeon-gold-5120-128gb-960gb-ssd-2x10gb-deep-learning-server/