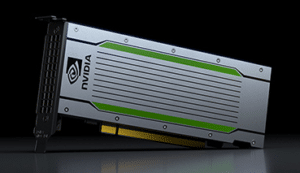

We’re pleased to announce the availability of the new NVIDIA Tesla T4 GPU, a great addition to an already powerful portfolio of NVIDIA GPUs for Deep Learning. NVIDIA Tesla GPUs are used for Video Processing, Big Data, Hyperconverged Appliances, Internet of Things (IoT), In-Memory Analytics, Machine Learning (ML), Artificial Intelligence (AI), and intensive Data Center, High Performance Computing (HPC), or Hyperscale Infrastructure applications. They are well suited to autonomous cars, molecular dynamics, computational biology, and fluid simulation, and even to advanced Virtual Desktop Infrastructure (VDI) applications.

The NVIDIA Tesla T4 GPU is positioned as the world’s most advanced inference accelerator. Deep Learning requires both neural network training and inference: training teaches a neural network from data and improves its accuracy, while inference applies the trained network to new data to draw meaningful insights. In his blog, Michael Copeland clearly explains the differences between training and inference.

Powered by NVIDIA Turing Tensor Cores, T4 brings revolutionary multi-precision inference performance to accelerate the diverse applications of modern AI. Packaged in an energy-efficient 70-watt, small PCIe form factor, T4 is optimized for scale-out servers and is purpose-built to deliver state-of-the-art inference in real time.

Responsiveness is key to user engagement for services such as conversational AI, recommender systems, and visual search. As models increase in accuracy and complexity, delivering the right answer right now requires exponentially larger compute capability. Tesla T4 delivers up to 40X better low-latency throughput, so more requests can be served in real time.

The Tesla T4 is available for immediate purchase at Dihuni.com. Please visit the T4 product page for more information. We will also be announcing Dihuni OptiReady systems based on the T4 GPU.